By Lionel Snell, Editor, NetEvents

Ksenia Efimova, IDC’s Senior Research Analyst, EMEA Telecoms and Networking, discussed Architectural Management Trends and Next Generation Data Centres at a recent EMEA NetEvents in Barcelona. She set the scene by referring to the “Platform” model: the First Platform is the mainframe computer and the Second Platform is client/server. “According to IDC, the third platform consists of cloud, social, mobility and data; and innovation accelerators: AR, VR, AI, robotics, blockchain, et cetera” (Fig 1).

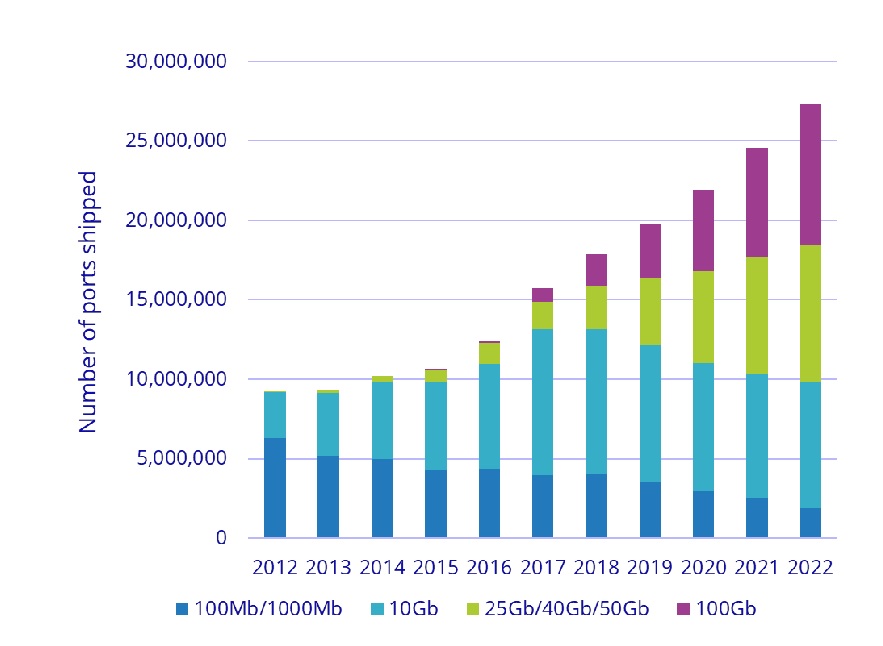

Ksenia Efimova pointed out that data is at the centre of digital transformation, and its complexity, as well as its volume, is increasing: “What has to be done and has to be changed is the management of the data, security of the data and the overall approach. Data centres are the tools to process the data and the data centres need to change and adapt.” How can they change? An obvious first answer is that speeds must increase. She showed a diagram of past and future expectations for sales of high-speed switches (Fig 2).

“Several vendors have already introduced 400 gigs, but it doesn’t mean that enterprises would go for that. Mostly it’s going to be driven by the hyperscalers.”

But she emphasised that this increase in speed will not solve all the problems, and nor will a rip-and-replace of infrastructure. What is needed is a whole new approach – to storage, to servers, to the network, and to the system as a whole. For a start, we are increasingly up against limits on energy consumption and the need for greater efficiency. The complexity of managing a mix of on-premises, off-premises, cloud-based and traditional data centres, both private and public, demands greater automation and machine intelligence.

In that context, she opened up the discussion with her panel from NetFoundry, Mellanox Technologies and VMware by asking what, in their opinion, should be the very first consideration – whether for the enterprise or the hyperscale market – when planning tomorrow’s Third Platform data centre.

Philip Griffiths, Head of EMEA Partnerships at NetFoundry, sensibly pointed out that the first thing must be to clarify your strategy and objectives: “Are you looking to build a hyperscale data centre? Or just something for a local German subsidiary to keep data in-country? Or are you looking for edge locations to reduce latency? Or IoT processing, where you might simply need a couple of blades or racks within a telco tower?”

Once that is agreed, you should decide how to deliver what the customer wants, allowing for the topics already mentioned: reducing energy consumption, using automation and AI, and having everything software-defined to avoid sending engineers onsite to fix things manually every time. “But really,” he summarised, “the key first step is, what am I trying to achieve? Who am I delivering this to? Where am I going to make money? Some of the data centre providers, Equinix for example, have repositioned themselves as mostly a connectivity company to different clouds, providing places where data’s relocating.”

Kevin Deierling, Chief Marketing Officer, Mellanox Technologies, suggested a holistic approach: “Think outside the computer. In the old days you could count on Moore’s law doubling performance every couple of years – [the CPUs] could just absorb all the software and you just keep writing inefficient applications.” Referring to Martin Casado and VMware, he added: “incredible technologies, but they can consume all of the CPU cycles”.

In the old days, he said, a Cisco router forwarded packets in software: “Today we ship 100 gig, 400 gig switches: no network in the world can forward packets using software”. Instead you need a hybrid of ASIC hardware and software to accelerate virtual machine forwarding, firewall rules, load balancing and so on. “When I first told Martin Casado… he was so excited. He goes: ‘you mean you put a virtual switch into silicon in an Ethernet NIC? … that’s fantastic. Too bad I can’t use it, because I’m VMware and I need to control that interface in order to have software control’. It was only then we explained that the control path is still controlled by the VMware.”

Policy decisions still need to be made at the higher management level; it’s just the data path that is accelerated. “The intelligence still needs to be at that higher level; and really, what we like to think about is optimising at the data centre level… you’re not optimising at individual box levels, but across the entire platform – compute, storage, networking and application – think holistically. The data centre is the computer. Solve things at that level – rather than getting down into the weeds – and you’ll do much better.”
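To make the split between a software-controlled policy path and an accelerated data path more concrete, here is a minimal Python sketch. It is not Mellanox’s or VMware’s implementation: the class names, the three-field flow key and the port-blocking rule are all hypothetical. It illustrates a flow cache: the first packet of a flow misses in the offloaded table and is referred to software, which makes the policy decision and installs the result, so later packets of that flow stay on the fast path.

```python
from typing import Dict, Iterable, Tuple

# Hypothetical match fields: (source IP, destination IP, destination port).
FlowKey = Tuple[str, str, int]


class SoftwareControlPlane:
    """Holds the policy; every decision is made here, in software."""

    def __init__(self, blocked_ports: Iterable[int]) -> None:
        self.blocked_ports = set(blocked_ports)

    def decide(self, key: FlowKey) -> str:
        _, _, dst_port = key
        return "drop" if dst_port in self.blocked_ports else "forward"


class OffloadedDataPath:
    """Stands in for the NIC: it only applies actions it has been given."""

    def __init__(self, control: SoftwareControlPlane) -> None:
        self.control = control
        self.table: Dict[FlowKey, str] = {}
        self.hits = 0
        self.misses = 0

    def forward(self, key: FlowKey) -> str:
        action = self.table.get(key)
        if action is None:
            # Slow path: the first packet of a flow is referred to software.
            self.misses += 1
            action = self.control.decide(key)
            # Install the decision so later packets stay on the fast path.
            self.table[key] = action
        else:
            # Fast path: no software involvement at all.
            self.hits += 1
        return action

    def flush(self) -> None:
        """Called when policy changes, so stale decisions are not reused."""
        self.table.clear()


if __name__ == "__main__":
    nic = OffloadedDataPath(SoftwareControlPlane(blocked_ports=[23]))
    web = ("10.0.0.1", "10.0.0.2", 443)
    for _ in range(3):
        nic.forward(web)
    print(nic.forward(("10.0.0.1", "10.0.0.2", 23)), nic.hits, nic.misses)  # drop 2 2
```

The point of the design is the one Deierling makes: the software side still owns every policy decision, and the hardware only repeats decisions it has already been handed.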

Joe Baguley, Vice President and Chief Technology Officer for EMEA, VMware, agreed about seeing the whole data centre as a single computer. Then he added: “A lot of the customers we’re talking to aren’t building new data centres; they’re trying to get out of data centres… What they’re looking to do is to move into someone else’s data centre… or just move their VMs to one of the providers that hosts VMs”.

Those that are building data centres, he said, are looking at GIFEE (Google Infrastructure For Everyone Else), asking how the hyperscalers build a data centre and “how can I do that myself?” Basically, everything’s done in software. “The customers that are building large scale data centres, they’re looking to rack essentially Lego building blocks of hyper-converged infrastructure plugged in via a 10 gig spine, which then goes 10/40, or even 40 to 100… the spine is just flat layer 2, because all the intelligence is done in software on the devices.”

That allows a much more efficient use of hardware from an energy perspective. It also gives hardware what he called “a rolling death”. He explained how most businesses get cluttered with hardware bought over the years for specific projects, whereas a proper cloud-scale operator on a software-virtualised platform adds new hardware to racks like Lego blocks. The most critical workloads automatically go onto the latest kit, so old hardware works its way down the hierarchy to lower-priority jobs until it falls away. Compared with the peaks and troughs of periodic hardware refreshes, this steady rolling process is far more efficient.
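The “rolling death” Baguley describes amounts to a placement rule: rank hosts by hardware age, rank workloads by criticality, and fill the newest kit first. The Python sketch below is a deliberately simplified illustration, not VMware’s scheduler; the `Host` and `Workload` types, the integer `generation` field, the fixed slot count per host and the greedy strategy are all assumptions made for the example. Hosts left with nothing to run are the ones that have fallen away.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Host:
    name: str
    generation: int  # hypothetical: higher number = newer hardware (e.g. purchase year)
    slots: int       # hypothetical: how many workloads the host can run
    workloads: List["Workload"] = field(default_factory=list)


@dataclass
class Workload:
    name: str
    priority: int    # higher number = more critical


def place(workloads: List[Workload], hosts: List[Host]) -> Tuple[List[Workload], List[str]]:
    """Greedy placement: the most critical workloads land on the newest hosts.

    Returns the workloads that did not fit and the names of idle hosts,
    which are the candidates for retirement.
    """
    for host in hosts:
        host.workloads = []
    newest_first = sorted(hosts, key=lambda h: h.generation, reverse=True)
    # One entry per slot, newest hardware first.
    slots = [h for h in newest_first for _ in range(h.slots)]
    most_critical_first = sorted(workloads, key=lambda w: w.priority, reverse=True)
    for host, work in zip(slots, most_critical_first):
        host.workloads.append(work)
    unplaced = most_critical_first[len(slots):]
    idle = [h.name for h in newest_first if not h.workloads]
    return unplaced, idle


if __name__ == "__main__":
    hosts = [Host("rack-a", 2019, 2), Host("rack-b", 2021, 2), Host("rack-c", 2023, 2)]
    jobs = [Workload("erp", 9), Workload("crm", 8), Workload("web", 5), Workload("batch", 2)]
    print(place(jobs, hosts)[1])           # ['rack-a']
    hosts.append(Host("rack-d", 2024, 2))  # a new Lego block added to the rack
    print(place(jobs, hosts)[1])           # ['rack-b', 'rack-a']
```

Adding `rack-d` moves the ERP and CRM jobs onto the newest kit, pushes the lower-priority jobs down a generation, and leaves the two oldest racks idle. A real platform would do this with live migration and would respect capacity, affinity and licensing constraints that this sketch ignores.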

Baguley also sounded a caution about energy efficiency: “We have to be aware of Jevons paradox, in that the more we make something efficient, the cheaper it eventually becomes to run, therefore the more people look for ways to use it, therefore we use more of it… Randomly claiming that using the cloud is energy efficient is a very, very long, dark road that you don’t want to go down”.
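One common way to see why efficiency gains can backfire is a constant-elasticity demand model. This is a textbook simplification, not something the panel presented. Assume demand for compute is Q = k·c^(−ε), where c is the cost per unit of work and ε is the price elasticity of demand, and assume c is proportional to the energy e needed per unit of work. Total energy is then E = e·Q ∝ e^(1−ε), so making each unit of work more efficient raises total consumption whenever ε > 1. The sketch below simply evaluates that formula; `k` and `price_per_kwh` are arbitrary illustrative constants.

```python
def total_energy(energy_per_unit: float, elasticity: float,
                 k: float = 1.0, price_per_kwh: float = 1.0) -> float:
    """Total energy under a constant-elasticity demand curve (illustrative model)."""
    cost_per_unit = price_per_kwh * energy_per_unit  # cheaper to run when more efficient
    demand = k * cost_per_unit ** (-elasticity)      # Q = k * c^(-elasticity)
    return energy_per_unit * demand                  # E = e * Q


if __name__ == "__main__":
    for eps in (0.5, 1.0, 1.5):
        before = total_energy(1.0, eps)
        after = total_energy(0.5, eps)  # twice as efficient per unit of work
        print(f"elasticity {eps}: {before:.3f} -> {after:.3f}")
```

With an elasticity of 1.5, halving the energy per unit of work raises total energy by about 41%, which is the rebound Baguley warns about; with 0.5, the saving survives but shrinks to about 29% instead of 50%.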

Ksenia Efimova asked him whether this hyper-converged approach suited everyone: “right now we see the migration of CRM systems, we see the migration of ERP systems to hyper-converged. So will that trend be continuous all the way to the end?”

Baguley replied: “Yes. If you can virtualise it, it will run on hyper-converged and you can pretty much virtualise anything… The barrier to HCI is very rarely anything to do with the apps or the software we’re trying to run; it’s the people not understanding how to take advantage of HCI. Because, if you’re using hyper-converged infrastructure and properly building a data centre as one big machine, there’s a whole bunch of people that need to give up their fiefdoms and understand they’re playing a bigger game. Networking, compute, storage – all one.”

Kevin Deierling pointed out how this was moving to the edge: “We have a SmartNIC that combines 25 gig, 100 gig networking connectivity with ARM cores for edge applications; and now that’s running ESXi. So we’re starting to see hypervisors running on these tiny little machines.” He described hyper-converged infrastructure as “invisible infrastructure”: easy to deploy, and it just worked, until you moved a VM and broke the network. But now, with SmartNIC intelligence in the network, when something moves there is a notification and the network adapts: “so now we’ve made the network invisible too”. He agreed that the remaining problems were behavioural.

This migration of intelligence towards the edge prompted further discussion. Philip Griffiths gave the 5G IoT example: it was originally suggested that everything would connect to the cloud, but instead the move is to a multi-tier architecture, with massive cloud data centres, then regional cloud data centres, then on-premises edge data centres, and maybe even IoT gateways doing local processing. “We’re working with Dell and VMware on an IoT opportunity where we’re deploying onto gateways to provide that connectivity back into various levels of compute, because that traditional MPLS network – where you would have regional carriers connecting in all to one central point – that’s not possible. We’re pushing these data centres processing data absolutely everywhere”.

It was all sounding very positive, until Ksenia raised the problem of a global skills shortage. Deierling suggested that the hyperscalers are willing to spend time developing to save money, whereas enterprises spend money to save time: “they will actually pay up front to have somebody else manage them, and I think that’s a false trade-off… what they’ve bought is complexity. You think you’re getting a turnkey platform from Cisco and the complexity there is so high that you actually pay for managed services to manage all this stuff”.

For Baguley, the key to hyperscale was automation: “Enterprises have yet to wake up to the fact that automation is a fundamental design requirement, not a bolt-on… I see these people building systems and then working out afterwards how to automate, as opposed to working out how to build an automated system – it’s the only way you get to scale”.

Ksenia’s final question was about marketing: “Who do you see as your partners when going to customers and saying, we can help you to transform your data centres?”

For Mellanox, Deierling said that they have been selling mostly to the hyperscale giants, and that is pretty straightforward. Dealing with a multivendor setup is harder, and there VMware is a good partner. “Sometimes it’s just business challenges and we have lots of partners that come together because we’re optimising across the whole solution stack – the infrastructure layer, the application layer, networking, storage. Everything has to just be fully integrated. That’s the biggest challenge we face”.

For NetFoundry, Philip Griffiths said they too had a lot of partners: “There’s many interesting, cool things we can do, but ultimately you have to square it back to what’s good for the business and how this is going to drive things forward.” It needs people with two skillsets, technology and customer relationships: “One, being able to drive the sales side of the business – the majority of IT businesses are built to drive profit. Two, having skilled people who bring those together and see the value in partnerships”.

VMware’s Joe Baguley said: “The challenge with most organisations is a certain narrow-mindedness, based on where they’ve come from. They might be experts in networking, so everything will be seen through the network lens. Or it will be through the storage lens, the compute lens, the managed service lens, or whichever they come from. It’s actually understanding that they have a broader viewpoint, or at least gathering them around a table to build something bigger and broader.

“At VMware we collected an incredibly diverse mindset to build this vision going forward. Because we had people like Martin Casado on the team to remind us to look at things differently. In the early days we were talking about VMs and one of the team said: ‘oh no – you should be really calling them containers’. Everyone was like, ‘containers?’ This is 2011. What are you on about? It was the guy that invented zones on Solaris, so he kind of knew what he was talking about… That then made us think, we’re not just talking about a future platform for VMs, it’s a future platform for Apps in containers; they just happen to be VMs right now and in the future they’re containers and next is unikernels and so on”.