Consequently, content moderation—the monitoring of UGC—is essential for online experiences. In his book Custodians of the Internet, sociologist Tarleton Gillespie writes that effective content moderation is necessary for digital platforms to function, despite the “utopian notion” of an open internet. “There is no platform that does not impose rules, to some degree—not to do so would simply be untenable,” he writes. “Platforms must, in some form or another, moderate: both to protect one user from another, or one group from its antagonists, and to remove the offensive, vile, or illegal—as well as to present their best face to new users, to their advertisers and partners, and to the public at large.”

Content moderation addresses a wide range of content across industries. Skillful content moderation can help organizations keep their users safe, their platforms usable, and their reputations intact. A best-practices approach to content moderation draws on increasingly sophisticated and accurate technical solutions while backstopping those efforts with human skill and judgment.

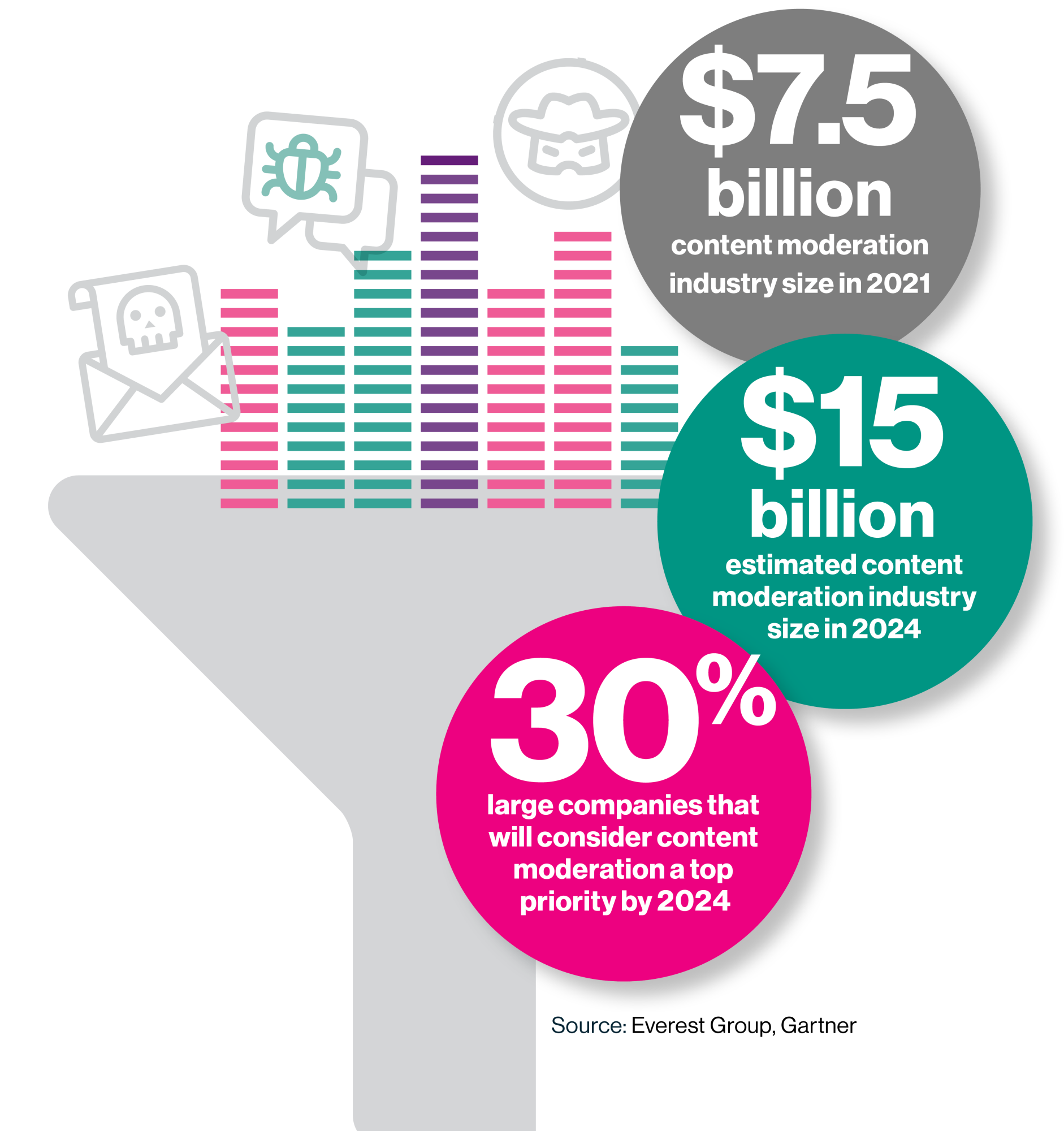

Content moderation is a rapidly growing industry, critical to all organizations and individuals who gather in digital spaces (which is to say, more than 5 billion people). According to Abhijnan Dasgupta, practice director specializing in trust and safety (T&S) at Everest Group, the industry was valued at roughly $7.5 billion in 2021—and experts anticipate that number will double by 2024. Gartner research suggests that nearly one-third (30%) of large companies will consider content moderation a top priority by 2024.

Content moderation: More than social media

Content moderators remove hundreds of thousands of pieces of problematic content every day. Facebook’s Community Standards Enforcement Report, for example, documents that in Q3 2022 alone, the company removed 23.2 million instances of violent and graphic content and 10.6 million instances of hate speech, in addition to 1.4 billion spam posts and 1.5 billion fake accounts. Although social media may be the most widely reported example, a huge number of industries rely on UGC, from product reviews to customer service interactions, and consequently require content moderation.

“Any site that allows information to come in that’s not internally produced has a need for content moderation,” explains Mary L. Gray, a senior principal researcher at Microsoft Research who also serves on the faculty of the Luddy School of Informatics, Computing, and Engineering at Indiana University. Other sectors that rely heavily on content moderation include telehealth, gaming, e-commerce and retail, and the public sector and government.

In addition to removing offensive content, content moderation can detect and eliminate bots, identify and remove fake user profiles, address phony reviews and ratings, delete spam, police deceptive advertising, mitigate predatory content (especially that which targets minors), and facilitate safe two-way communications in online messaging systems. One area of serious concern is fraud, especially on e-commerce platforms. “There are a lot of bad actors and scammers trying to sell fake products, and there’s also a big problem with fake reviews,” says Akash Pugalia, the global president of trust and safety at Teleperformance, which provides non-egregious content moderation support for global brands. “Content moderators help ensure products follow the platform’s guidelines, and they also remove prohibited goods.”

This content was produced by Insights, the custom content arm of MIT Technology Review. It was not written by MIT Technology Review’s editorial staff.