In the new research, the Stanford team wanted to know if neurons in the motor cortex contained useful information about speech movements, too. That is, could they detect how “subject T12” was trying to move her mouth, tongue, and vocal cords as she attempted to talk?

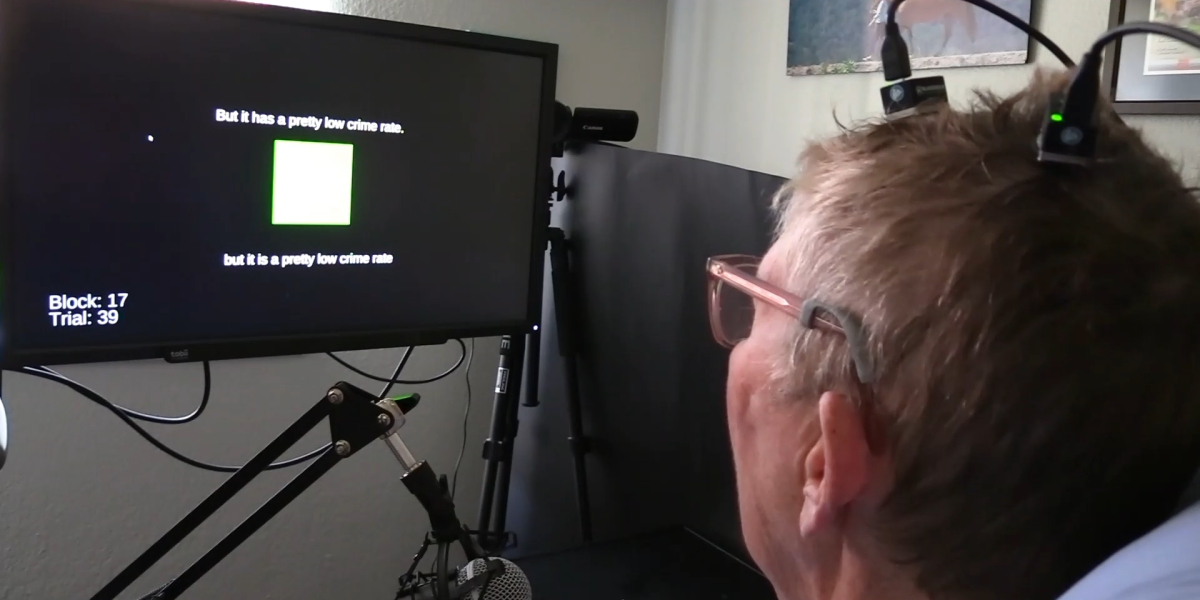

These are small, subtle movements, and according to Sabes, one big discovery is that just a few neurons contained enough information to let a computer program predict, with good accuracy, what words the patient was trying to say. That information was conveyed by Shenoy’s team to a computer screen, where the patient’s words appeared as they were spoken by the computer.

The new result builds on previous work by Edward Chang at the University of California, San Francisco, who has written that speech involves the most complicated movements people make. We push out air, add vibrations that make it audible, and form it into words with our mouth, lips, and tongue. To make the sound “f,” you put your top teeth on your lower lip and push air out—just one of dozens of mouth movements needed to speak.

A path forward

Chang previously used electrodes placed on top of the brain to permit a volunteer to speak through a computer, but in their preprint, the Stanford researchers say their system is more accurate and three to four times faster.

“Our results show a feasible path forward to restore communication to people with paralysis at conversational speeds,” wrote the researchers, who included Shenoy and neurosurgeon Jaimie Henderson.

David Moses, who works with Chang’s team at UCSF, says the current work reaches “impressive new performance benchmarks.” Yet even as records continue to be broken, he says, “it will become increasingly important to demonstrate stable and reliable performance over multi-year time scales.” Any commercial brain implant could have a difficult time getting past regulators, especially if it degrades over time or if the accuracy of the recording falls off.

Image credit: Willett, Kunz et al.

The path forward is likely to include both more sophisticated implants and closer integration with artificial intelligence.

The current system already uses a couple of types of machine learning programs. To improve its accuracy, the Stanford team employed software that predicts what word typically comes next in a sentence. “I” is more often followed by “am” than “ham,” even though these words sound similar and could produce similar patterns in someone’s brain.
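To make the "am" versus "ham" idea concrete, here is a minimal sketch of how a next-word model can break a tie between similar-sounding candidates. It is not the Stanford team's software. The word counts, the decoder probabilities, and the function names `next_word_log_prob` and `rescore` are all invented for illustration, and the simple two-word (bigram) model stands in for whatever language model the researchers actually used.

```python
import math

# Hypothetical counts of which word follows "i" in some text corpus.
# These numbers are made up for illustration only.
BIGRAM_COUNTS = {
    ("i", "am"): 900,
    ("i", "have"): 600,
    ("i", "ham"): 1,
}


def next_word_log_prob(prev, word, counts, vocab_size, alpha=1.0):
    """Return add-alpha smoothed log P(word | prev) from bigram counts."""
    total = sum(c for (p, _), c in counts.items() if p == prev)
    count = counts.get((prev, word), 0)
    return math.log((count + alpha) / (total + alpha * vocab_size))


def rescore(prev, decoder_log_probs, counts, lm_weight=1.0):
    """Combine decoder scores with language-model scores and pick the best word."""
    vocab = {w for (_, w) in counts} | set(decoder_log_probs)
    scored = {
        word: dec + lm_weight * next_word_log_prob(prev, word, counts, len(vocab))
        for word, dec in decoder_log_probs.items()
    }
    return max(scored, key=scored.get), scored


# Hypothetical decoder output: the brain signal alone slightly favors "ham".
decoder_log_probs = {"am": math.log(0.45), "ham": math.log(0.55)}

best, scores = rescore("i", decoder_log_probs, BIGRAM_COUNTS)
print(best)  # prints "am"
```

On its own, the made-up decoder would pick "ham." Once the next-word probabilities are added in, "am" wins easily, because "I ham" almost never occurs in the toy counts. The `lm_weight` parameter is an assumed knob for how much to trust the language model relative to the brain signal.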